The vOICe for Android

Contains ads

4.3 stars

1.63K reviews

Everyone

500K+ downloads

About this app

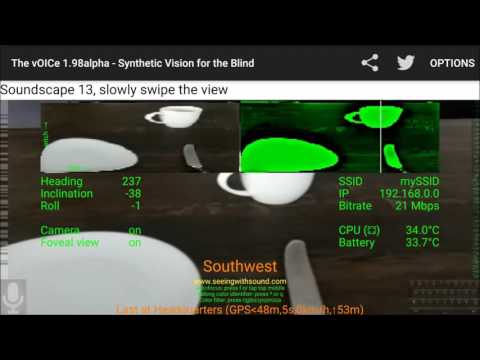

See with your ears! The vOICe for Android maps live camera views to soundscapes, offering augmented reality and unprecedented visual detail for the totally blind through sensory substitution and computer vision. Also includes live talking OCR, a talking color identifier, talking compass, talking face detector and a talking GPS locator, while Microsoft Seeing AI and Google Lookout object recognition can be launched from The vOICe for Android by tapping the left or right screen edge.

Is it an augmented reality game or a serious tool? It can be both, depending on what you want it to be! The ultimate goal is to provide a form of synthetic vision to the blind, but sighted users can simply have fun playing the game of sight-without-eyesight. Visually impaired users with severe tunnel vision can check whether the auditory feedback helps them notice changes in the visual periphery. The vOICe for Android runs on smartphones and tablets, but is also compatible with most smart glasses, using the tiny camera in these glasses and a special user interface to generate a live sonic augmented reality overlay, hands-free! You may want to use an external battery connected via USB cable to keep the smart glasses battery from draining too quickly. You can help us by blogging and tweeting about your experiences, your use cases, and about how *you* learn to see with sound.

How does it work? The vOICe uses pitch for height and loudness for brightness in one-second left to right scans of any view: a rising bright line sounds as a rising tone, a bright spot as a beep, a bright filled rectangle as a noise burst, a vertical grid as a rhythm. Best used with stereo headphones for the most immersive experience and most detailed auditory resolution.
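The mapping described above can be sketched in a few lines of Python. This is a minimal illustration, not the app's actual implementation: the function name `sonify`, the 500 to 5000 Hz range, the exponential frequency spacing, the 16 kHz sample rate and the mono output are all assumptions chosen for the example.

```python
import math

def sonify(image, duration=1.0, sample_rate=16000, f_low=500.0, f_high=5000.0):
    """Turn a grayscale image into one left-to-right scan of mono audio.

    image: list of rows (top row first), each a list of brightness values in 0..1.
    Returns a list of float samples in the range -1..1.
    All parameter defaults are illustrative assumptions.
    """
    n_rows = len(image)
    n_cols = len(image[0])
    # Pitch encodes height: the top row gets the highest frequency.
    # Exponential spacing between f_high and f_low is an assumption.
    freqs = [
        f_high * (f_low / f_high) ** (r / (n_rows - 1)) if n_rows > 1 else f_low
        for r in range(n_rows)
    ]
    n_samples = int(duration * sample_rate)
    samples = []
    for i in range(n_samples):
        t = i / sample_rate
        # Time encodes horizontal position: pick the column being scanned now.
        col = min(i * n_cols // n_samples, n_cols - 1)
        # Loudness encodes brightness: each row's sine is scaled by its pixel value.
        value = sum(
            image[r][col] * math.sin(2 * math.pi * freqs[r] * t)
            for r in range(n_rows)
        )
        samples.append(value / n_rows)  # keep the mix within -1..1
    return samples
```

With a two-by-two image whose only bright pixel is in the top-right corner, `sonify([[0.0, 1.0], [0.0, 0.0]])` stays silent for the first half of the scan and then produces a high-pitched tone, which corresponds to the "bright spot as a beep" case in the description.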

Just experiment with simple visual patterns first, because real-life imagery is extremely complex. Randomly drop a bright item such as a DUPLO brick on a dark table top, and learn to reach for it through sound alone (close your eyes if you have eyesight). Next try and explore your own safe home environment, and learn to associate the complex sound patterns with what you already know is there. Sighted users can also use the app with Google Cardboard compatible devices through a swipe-down on the main screen to toggle the binocular view.

For serious users: learning to see with sound is like learning a foreign language or learning to play a musical instrument, really challenging your perseverance and brain plasticity. It may well be the ultimate brain training system, bridging the senses through artificial synesthesia. A general training manual for The vOICe (not specific to the Android version) is available online at

https://www.seeingwithsound.com/manual/The_vOICe_Training_Manual.htm

and usage notes for running The vOICe for Android hands-free on smart glasses are at

https://www.seeingwithsound.com/android-glasses.htm

Do not worry about the many options of The vOICe for Android: human eyes have no buttons or options, and The vOICe is similarly designed to perform its main function out-of-the-box, so you do not have to use any options to get going. Some of the most common options appear as you slowly slide your finger across the main screen.

Why is The vOICe free? Because our foremost goal is to make a real change by lowering barriers to use as much as we can. You will find that competing technologies cost upwards of $10,000 and yet have lower specs. The perceptual resolution offered by The vOICe is unmatched even by $150,000 "bionic eye" retinal implants (PLoS ONE 7(3): e33136).

The vOICe for Android supports English, Dutch, German, French, Spanish, Italian, Estonian, Hungarian, Polish, Slovak, Turkish, Russian, Chinese, Korean and Arabic (menu Options | Language).

Please report bugs to feedback@seeingwithsound.com, and visit the web page http://www.seeingwithsound.com/android.htm for a detailed description and disclaimers. We are on Twitter at @seeingwithsound.

Thank you!

Ratings and reviews

4.3

1.49K reviews

Anurag Korg

April 30, 2020

It's good, but I feel there is scope for improvement. Can the image-to-sound conversion be made more accurate? The sample rate could be slowed down, as accuracy is more important than speed. Can more tones and pitch variations be produced so that a blind person can create a more detailed picture of the visual information? I really like this idea, it is awesome, but I hope that you could make a better, more accurate version of this. It can be a real blessing and game changer for the blind.

36 people found this review helpful

Peter Meijer

April 30, 2020

Thank you, Anurag. We are continuously seeking improvements. The vOICe app has options for half and double scan rate for trading off accuracy against real-time response. Experience shows that more tones are not necessarily better, nor do they necessarily sound better: The vOICe for Windows lets you experiment with that. See also the design considerations at https://www.seeingwithsound.com/voicebme.html.

Jonathan Paul

December 19, 2023

Huge props to the developer for making such a tool available even though it is quite abstract. I'd love to see this adapted to use a Hilbert curve instead of a left-right scanning method. See the 3Blue1Brown video on seeing with sound. Thank you.

16 people found this review helpful

A Google user

December 30, 2018

First used the app on Android 2 years ago, and it was very basic and hard to use. Wow, how it has improved: it's not too difficult to learn and interpret basic changes of environment from sounds. If you had to rely on this 24/7 as a sight-impaired person, I'm sure it would be a big help once you begin to learn what the sounds and clicks represent. The spoken location, navigation and color functions are good. This app is best used in 3D with headphones. Maybe use with a remote spec-mounted camera would enhance this app greatly.

37 people found this review helpful

What’s new

v2.82: Integrates two AI depth view models: keyboard shortcut key 'h' toggles "good" model (low CPU load), and capital 'H' toggles "best" model (high CPU load). AI depth view on/off via menu Options | AI depth view. Fix for broken Back button behaviors with Android 16+.

App support

About the developer

Peter Bartus Leonard Meijer

feedback@seeingwithsound.com

Netherlands